Feeling stuck on how to implement AI in your business? Start with this guide

Mar 27, 2026

Development

Reading time: 10 mins

Implementing AI in your company means detecting your biggest workflow bottlenecks and integrating artificial intelligence to make them efficient — from automating repetitive tasks to connecting tools and making faster, data-backed decisions. It's the process of identifying where AI can create real value, building it into your operations, and scaling what works.

Most companies can point to at least one AI pilot they ran in the past two years. Fewer can point to the success they gained from it, which is exactly what the latest reports show.

Around 88% of organisations report using AI in at least one business function, but the less-publicised finding is that only 7% got tangible results from it. Now what does that tell you?

From what we’ve seen, the problem is that businesses invest in AI before knowing exactly what it should improve.

Matthew Evans, who led AI initiatives at Airbus, put it simply: “We don’t invest in AI. We don’t invest in natural language processing. We don’t invest in image analytics. We are always investing in a problem.”

Looking at how companies have tried to implement AI over the past few years, you can see a similar pattern: they start with AI automation experiments using off-the-shelf tools and then expand them into several different workflows.

While on the surface AI may seem to remove a few repetitive headaches, it also introduces new challenges such as tool overload and unclear guidelines. These ultimately throw the entire adoption process into chaos and, in the worst case, send it back to square one.

If you feel stuck and confused about where to even start with AI in your business, this guide will walk you through why a strategy matters before anything else, what steps to follow, how to spot the pitfalls before they cost you, and what successful AI implementation actually looks like in practice. It draws on practical insights from Profico's CTO Ivan Ferenčak and his experience across multiple AI transformations.

What is an AI implementation strategy and why does your business need one in the first place?

An AI implementation strategy is your structured blueprint that defines how a business will adopt, integrate, and scale artificial intelligence technologies into its operations, products, or services — in a way that aligns with its business goals, resources, and culture.

The first part of getting that strategy is doing the internal assessment. If done right, it surfaces three questions that tend to get avoided until they become expensive:

why AI belongs in your company at all,

where it can create business value,

and how to integrate it without expensive system rebuilds.

Put those answers in a document and you have a clear starting point that tells you whether your company has the capacity for an AI transformation, whether the timing makes sense, and what success looks like beyond “the demo was impressive.”

A real AI implementation strategy keeps you off the road most companies end up on — a pile of disconnected tools, a climbing invoice, and a boardroom question nobody wants to answer.

It also does a few things no amount of enthusiasm can substitute for:

It identifies which use cases are worth pursuing before anyone starts building

It clarifies what your data and infrastructure need to look like

It brings technical, operations, and business teams into the same conversation early enough to shape decisions

It defines success in real terms — so six months in, when someone asks "Is this working?", you have an answer that isn't a shrug.

Why AI implementation strategy matters for your business - what successful cases taught us

The difference between businesses that found their footing in AI transformation and those that didn't almost always traces back to the same place - remembering that technology itself doesn’t bring any value.

Our CTO Ivan Ferenčak has been in that room with businesses on both sides of that line.

What that experience consistently showed is that the companies that know what to do with the technology, and where to apply it, are the ones that add value to the product or improve workflow efficiency.

How Vinify approached it - and what happened as a result

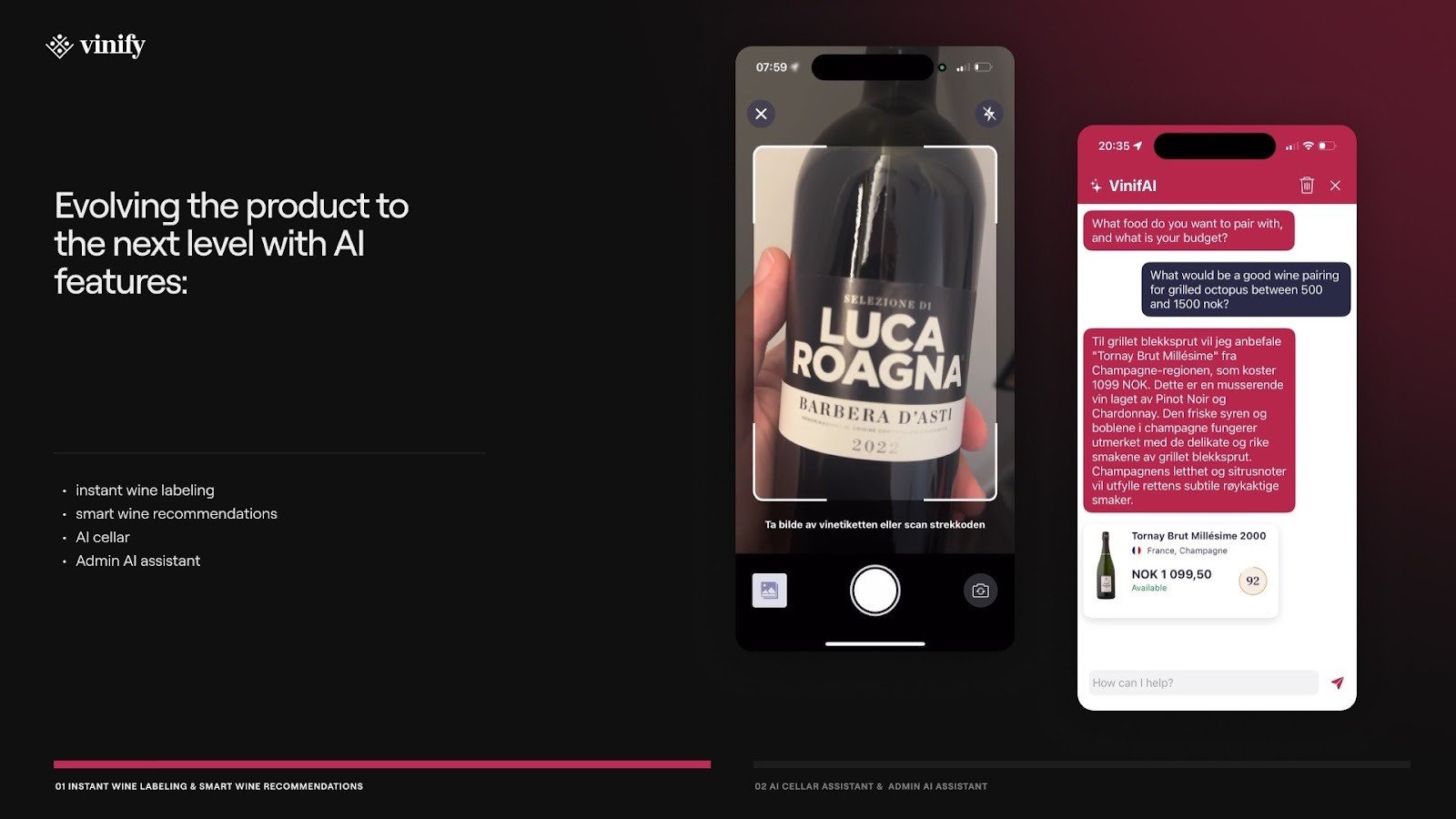

Our partner Vinify is a Norwegian wine management platform that added AI to its product in December 2024, only three years after the app's first release.

By the time AI entered the picture, their team had spent three years working directly with restaurants and other wine-related businesses.

At first, Vinify was a simple app for rating and saving wine at wine tastings.

After the first upgrade, which added virtual wine cellars, businesses started creating profiles and uploading their wine lists, using the platform as a marketing channel.

Their team recognised the potential to turn Vinify into a powerful tool for wine businesses and, in close collaboration with Profico, built exactly that - an operating system with inventory management, sales analytics, and wine operations.

Years of working directly with restaurants and wine businesses had given the team an intimate understanding of how wine management actually operated at scale — where manual processes were slowing businesses down and where the platform had room to take on more of that operational weight.

This is the part where AI became the next logical solution to explore. The idea was not to add something flashy, but to use the technology and bring it into the places they mapped out precisely.

The result was five tools built for specific jobs. The “batch import” processed wine lists from spreadsheets, PDFs, or photos directly into a ready-to-use digital inventory.

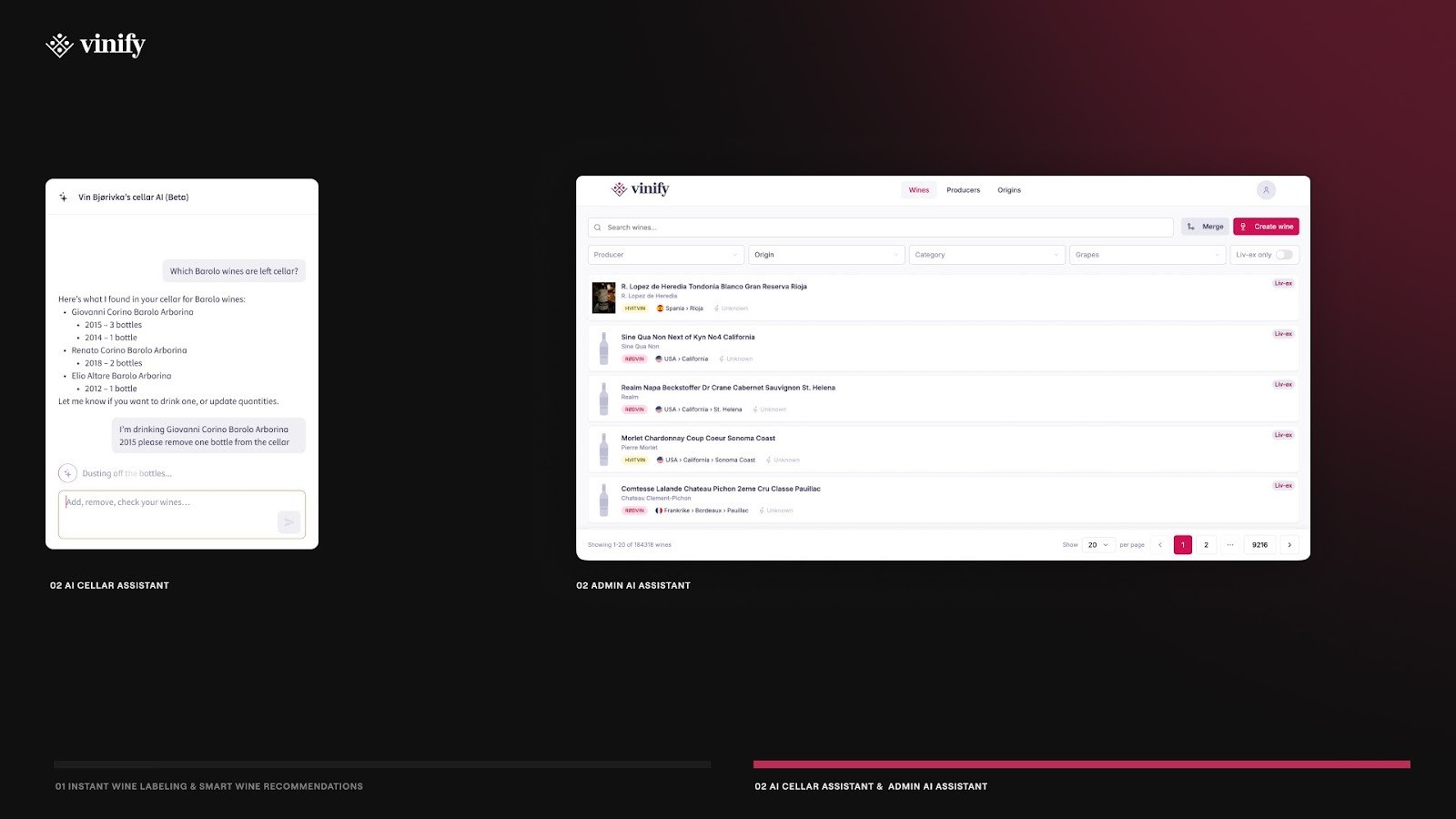

The cellar assistant let users manage inventory through natural language commands.

The admin tool gave administrators full control over the wine database, matching uploaded sources with existing entries to keep everything accurate. For customers, an instant label scanner surfaced wine details, ratings and availability from a single photo.

And the sommelier recommendation chat — trained on three professional sommelier books — matched customers to the right wine based on their budget, preferences, region, and food pairing.

What their approach confirms about strategy:

The work comes before the technology. Three years of building alongside wine businesses gave the team a precise understanding of where the product could go further. The AI features were built for opportunities that were identified and well understood.

AI entered a product that had already earned its place. With 50,000 monthly users and 89 registered businesses, Vinify had a clear foundation to build on. The strategy determined where AI belonged before anyone decided what to build.

Every feature had a job before it was built. The batch import, the cellar assistant, the label scanner — each one traces back to a specific gap in how wine businesses actually operated.

3 assumptions you must challenge before you build your AI implementation strategy

When executives watch competitors announce AI initiatives, the instinct is to start one of your own so you don't fall behind, without asking whether that direction makes sense.

And in that rush, AI transformation comes down to this: the strategy gets compressed into a timeline, the timeline gets compressed into a tool selection, and by the time the build starts, certain assumptions have already made themselves at home.

Here are the three you must evict so your strategy starts with a solid foundation.

"We don't really need to understand how it works — we just need to implement it"

Let’s start with the part you should remember: companies that saw real returns in 2025 spent between 50% and 70% of their AI budget on foundational work before touching the model layer.

Most companies, on the other hand, skip this part and treat AI deployment like a software installation: hand it to engineering, connect it to existing systems, and expect output.

In practice, that foundational work means mapping the workflows you want AI to operate in, cleaning and structuring the data the model will use, and defining what success looks like once the model starts working.

"Once we roll this out, the whole team gets more productive"

Most rollout plans set one productivity target for the entire team and measure an average.

That average hides the fact that AI saves a lot of time on routine, well-defined tasks and very little on work that requires judgment, context, and experience — which is usually the work that matters most to the business.
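To make the arithmetic concrete, here is a minimal Python sketch of how one team-wide average can mask very different outcomes. The task types and the minutes saved are made-up illustrative numbers, not measurements from any real rollout, and the function name is hypothetical.

```python
from statistics import mean

# Hypothetical minutes saved per task after an AI rollout (illustrative only).
# Each entry: (task type, minutes saved on one task).
observations = [
    ("routine", 30), ("routine", 28), ("routine", 32),
    ("judgment", 2), ("judgment", 1), ("judgment", 3),
]


def average_by_task_type(rows):
    """Group minutes saved by task type and average each group."""
    groups = {}
    for task_type, minutes in rows:
        groups.setdefault(task_type, []).append(minutes)
    return {task_type: mean(values) for task_type, values in groups.items()}


# One number for the whole team versus one number per kind of work.
team_average = mean(minutes for _, minutes in observations)
per_type = average_by_task_type(observations)

print(f"Team average: {team_average} min saved per task")
for task_type, avg in per_type.items():
    print(f"{task_type}: {avg} min saved per task")
```

With these sample numbers the team average is 16 minutes per task, yet routine tasks save 30 minutes and judgment-heavy tasks save only 2. Reporting the per-type breakdown alongside the average is what reveals where the tool actually helps.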

"Shipping an AI feature will give us a competitive edge"

This is a classic trap: leaders within organisations assume that technology works like a magic wand.

You must remember that customers don't pay for AI features. Employees don't change how they work because a tool has AI in it. They change when the output is reliable enough that trusting it costs less effort than verifying it.

Before Tomorro put a price on their AI feature that reads and processes contracts, they spent most of 2024 getting it accurate enough that users would stop double-checking every result.

At 50% accuracy, users verified everything — the feature was live but not changing how anyone actually worked. When they reached 95%, people stopped checking entirely.

Within two months, a quarter of existing customers were paying for it without any hard selling.

The accuracy threshold mattered more than the launch date. Ship before users can trust it and you teach them to treat it as unreliable — which is a harder perception to fix than simply waiting until it's ready.

How to implement AI in business when you're not sure where to begin: Use these 5 proven steps

Step 1: Understand what your business needs before AI can help it

We can’t stress this enough: start by mapping the work as it exists today — not how you wish it worked. Map every task, every decision point, every handoff between people or systems.

If you're building AI into your product, do the same from the user's side: what are they actually trying to get done, and at what point does the AI touch it?

Within that map, you're looking for where people execute repeatable steps versus where they make judgment calls. AI can reliably take over the first.

Once you have that clarity, write down what a good output looks like with real examples — that definition tells you which tool you need, and for product teams, whether the feature is ready to ship.

Challenges at this stage

Teams define the goal at too high a level: "automate customer support" or "add AI to our dashboard" tells you nothing about what the AI needs to do, when, and for whom.

The people who know the workflow best — the ones doing the work or using the product every day — are rarely in the room when these decisions get made.

Nobody agrees on what a good output looks like before the build starts, so there's no way to know if the tool is working until it's already causing problems.

Best practices

Involve the people doing the work in the mapping — not just leadership and engineering. For product teams, that means users.

Use the output definition as your tool filter: if a tool can't meet it, it's not the right tool.

Document what the workflow looks like today before changing anything, so you have a baseline to measure against.

Step 2: Know what data you have before you decide what AI can do

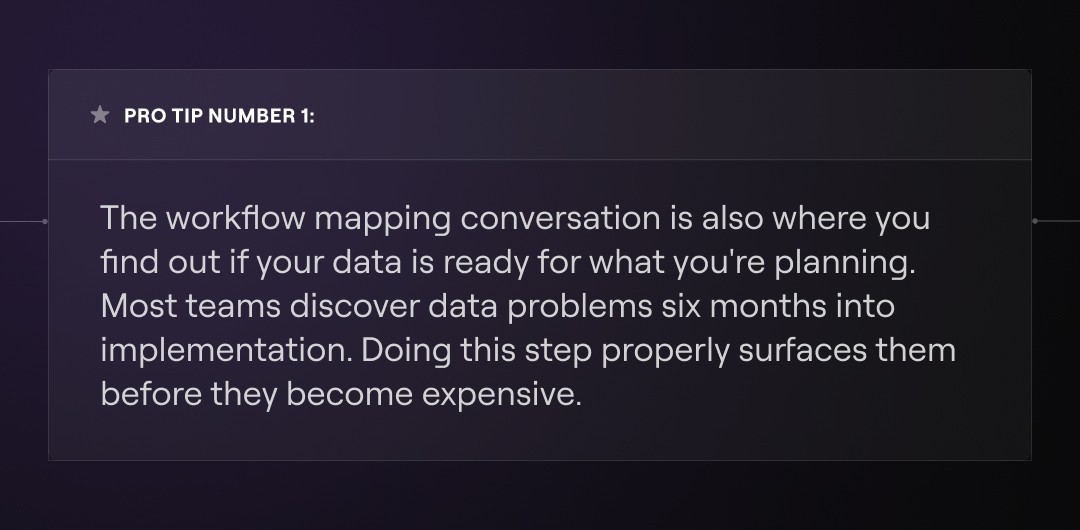

After completing the first step, track carefully where your data lives, who owns it, and whether it's consistent across systems.

For product teams, the question is what user behavioral signals you're actually capturing and whether they reflect what the AI needs to act on — a recommendation engine built on incomplete usage data will recommend the wrong things with full confidence.

Challenges at this stage

Companies assume historical data is sufficient without checking whether it reflects how the business actually operates today.

The data that exists is often owned by different teams with no shared standard, which makes it unreliable as model input without significant cleanup work.

For product teams, user behavioral data is frequently too thin or too broad to give the model anything meaningful to work with.

Best practices

Audit data quality and consistency before touching any tool — identify gaps, owners, and what needs to change for it to be usable.

Assign clear data ownership so quality doesn't degrade after launch.

For product teams, map what signals you're capturing from users and whether those signals are specific enough to support what the AI needs to do.
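A data audit like the one described above can start very small. The following Python sketch is one possible shape for it, assuming your data can be exported as lists of dictionaries. The system names (`crm`, `billing`), field names, and helper functions are all hypothetical and only illustrate two checks: missing values per field and values that disagree between systems.

```python
def audit_records(records, required_fields):
    """Count records with a missing or empty value for each required field."""
    missing = {field: 0 for field in required_fields}
    for record in records:
        for field in required_fields:
            value = record.get(field)
            if value is None or str(value).strip() == "":
                missing[field] += 1
    return missing


def find_conflicts(system_a, system_b, key, field):
    """Return keys present in both systems whose `field` values disagree."""
    b_by_key = {row[key]: row.get(field) for row in system_b}
    conflicts = []
    for row in system_a:
        k = row[key]
        if k in b_by_key and row.get(field) != b_by_key[k]:
            conflicts.append(k)
    return conflicts


# Hypothetical exports from two systems (illustrative data only).
crm = [
    {"customer_id": 1, "email": "a@example.com", "owner": "sales"},
    {"customer_id": 2, "email": "", "owner": "sales"},
    {"customer_id": 3, "email": "c@example.com", "owner": None},
]
billing = [
    {"customer_id": 1, "email": "a@example.com"},
    {"customer_id": 3, "email": "c@other.example"},
]

missing_counts = audit_records(crm, ["email", "owner"])
email_conflicts = find_conflicts(crm, billing, "customer_id", "email")

print("Missing values per field:", missing_counts)
print("Customers with conflicting emails:", email_conflicts)
```

The output of a check like this gives you a concrete list of gaps to assign to data owners before any tool is selected.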

Step 3: Set a success metric for every use case, not one for everything

Before any deployment, define two metrics for each use case:

- a technical one: is the AI producing accurate outputs?

- a business one: is that accuracy translating into something the company actually cares about?

For product teams, that means defining what user behavior change counts as success, not just whether the feature is being clicked.

A single company-wide productivity target averages out very different outcomes across tasks, teams, and use cases — and averages hide what's actually working and what isn't.

Challenges at this stage

Teams set technical metrics without connecting them to business outcomes - 95% model accuracy means nothing if it doesn't reduce the problem it was deployed to solve.

Success gets defined after deployment starts, which means there's no baseline to measure against.

For product teams, feature usage gets treated as success even when users are engaging out of curiosity rather than genuine need.

Best practices

Set both a technical and a business outcome metric for every use case and track them separately.

Capture your baseline before deployment so you have something real to measure against.

If the team can't agree on what good looks like for this use case, it's not ready to be deployed.
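One way to keep the two metrics separate is to record them side by side for each use case, together with the baseline. The Python sketch below is an illustration under stated assumptions, not a prescribed framework: the class, its field names, the thresholds, and the two sample use cases are all hypothetical, and the business metric is assumed to be something you want to reduce (for example, handling time).

```python
from dataclasses import dataclass


@dataclass
class UseCaseMetrics:
    name: str
    accuracy: float            # technical metric: share of correct outputs (0 to 1)
    accuracy_target: float     # minimum acceptable accuracy
    baseline_value: float      # business metric captured before deployment
    current_value: float       # same business metric after deployment
    target_improvement: float  # required relative reduction vs. baseline (0 to 1)

    def technical_ok(self) -> bool:
        return self.accuracy >= self.accuracy_target

    def business_improvement(self) -> float:
        """Relative reduction in the business metric compared with the baseline."""
        return (self.baseline_value - self.current_value) / self.baseline_value

    def business_ok(self) -> bool:
        return self.business_improvement() >= self.target_improvement


def report(m: UseCaseMetrics) -> dict:
    """Summarise both checks separately so one cannot hide the other."""
    return {
        "use_case": m.name,
        "technical_ok": m.technical_ok(),
        "business_ok": m.business_ok(),
    }


# Illustrative numbers only: minutes of handling time before and after.
triage = UseCaseMetrics("ticket triage", 0.95, 0.90, 20.0, 19.0, 0.15)
invoices = UseCaseMetrics("invoice entry", 0.92, 0.90, 40.0, 30.0, 0.20)

for use_case in (triage, invoices):
    print(report(use_case))
```

In this sample, ticket triage passes the technical check at 95% accuracy but fails the business check because handling time barely moved, while invoice entry passes both. Tracking the two flags separately is what exposes that gap.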

Step 4: Prove it works in one place before you scale it everywhere

Pick one high-value, well-defined use case where success is measurable and visible to the business. Get it working reliably, and let people see the results — adoption built on demonstrated proof travels further than any company-wide mandate.

For product teams, one AI feature that earns user trust gives you the behavioral data and confidence to expand.

Shipping ten features simultaneously makes it impossible to diagnose what's working and what isn't.

Challenges at this stage

Leadership pressure to show broad impact fast pushes teams to scale before the foundation is solid.

When multiple use cases run simultaneously, there's no clean signal on what's failing and why.

For product teams, shipping AI across multiple features before earning trust on any single one creates a perception problem that's hard to walk back.

Best practices

Choose a starting use case that is narrow enough to execute well and visible enough that success is obvious to the business.

Treat early adopters as your proof of concept — their behavior tells you more than any internal metric.

Step 5: Adoption doesn't scale without executive ownership

Somebody at the top needs to own adoption the same way they own revenue — with a metric, a timeline, and personal accountability for the outcome.

That means understanding the tools well enough to make informed decisions about them, tracking whether they're producing results, and removing the organizational blockers that slow adoption down.

Challenges at this stage

Executives approve the budget and delegate everything else to engineering or a transformation team, then measure progress through status updates rather than actual usage numbers.

AI fluency gets treated as something the team needs, not something leadership needs — which makes the whole initiative feel optional at every level below.

For product teams, when internal leadership hasn't adopted AI in their own workflows, the judgment required to make good decisions about AI features in the product simply isn't there.

Best practices

Assign a named executive owner for each AI initiative who is accountable for adoption outcomes, not just delivery milestones.

Make AI fluency a leadership KPI tracked the same way as any other business metric.

The executive sponsor should be able to demonstrate the tool, talk about where it works and where it doesn't, and use it visibly enough that the rest of the organization takes the signal.

Final take

According to McKinsey, only 6% of companies report meaningful financial impact from AI — and every single one of them had a structured implementation strategy behind it. Need guidance to get there? Let us help you. Contact us here.